Abstract

Learning to autonomously assemble shapes is a crucial skill for many robotic applications.

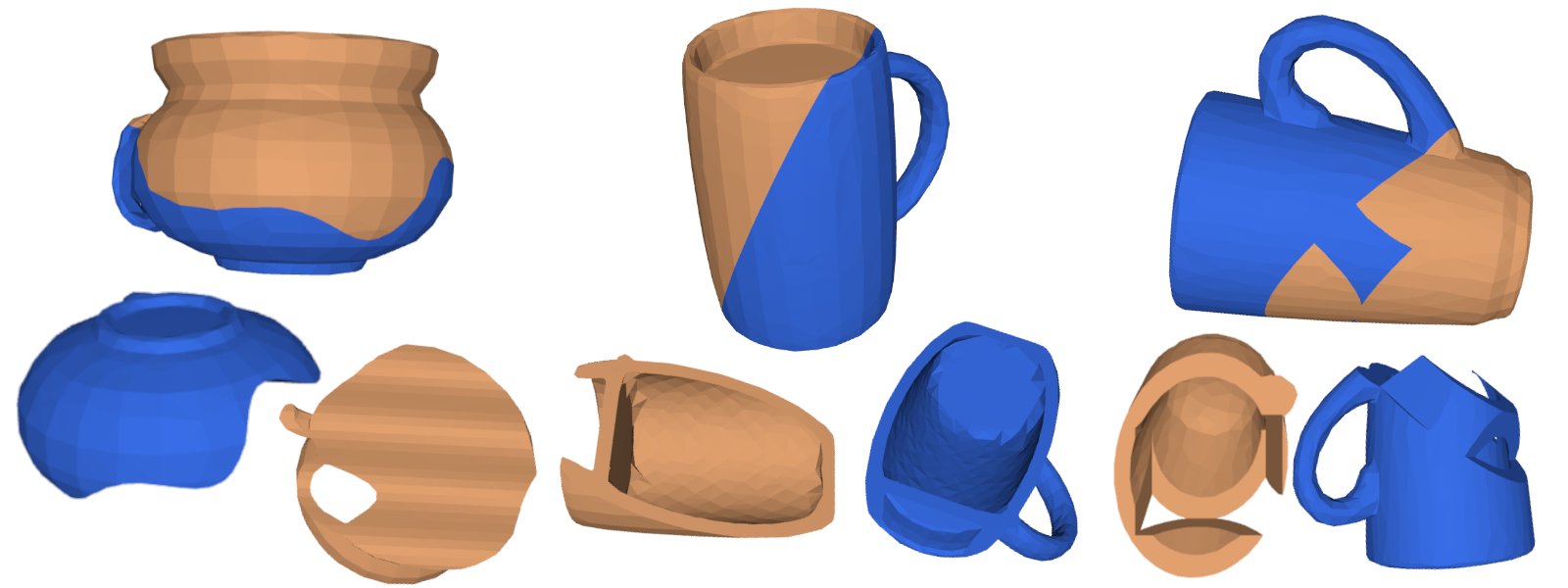

While the majority of existing part assembly methods focus on correctly posing semantic parts to recreate a whole object, we interpret assembly more literally: as mating geometric parts together to achieve a snug fit. By focusing on shape alignment rather than semantic cues, we can achieve cross-category generalization and scaling.

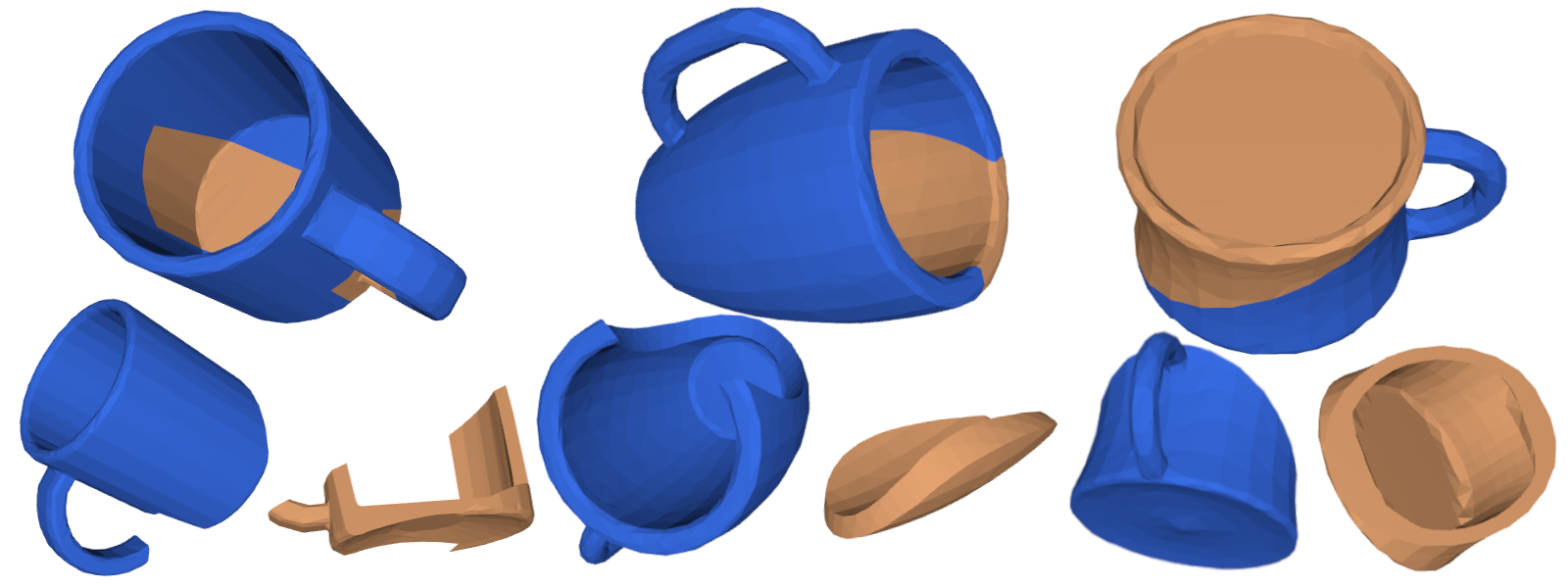

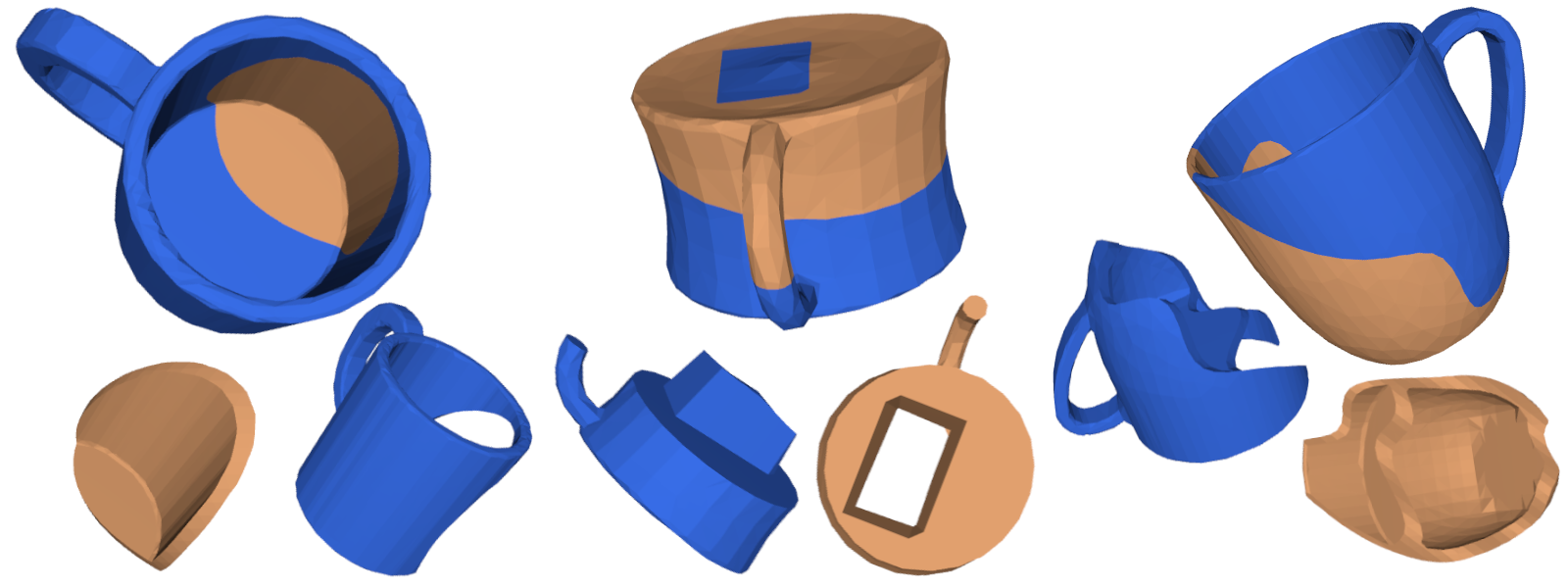

In this paper, we introduce a novel task, pairwise 3D geometric shape mating, and propose Neural Shape Mating (NSM) to tackle this problem. Given point clouds of two object parts of an unknown category, NSM learns to reason about the fit of the two parts and predict a pair of 3D poses that tightly mate them together. In addition, we couple the training of NSM with an implicit shape reconstruction task, making NSM more robust to imperfect point cloud observations.
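To make the task's input and output concrete, the following is a minimal sketch of how a predicted pair of poses could be applied to two part point clouds. It assumes each pose is a rigid transform given as a rotation matrix and a translation vector; the names `apply_pose` and `mate` are hypothetical and are not taken from NSM itself.

```python
import numpy as np

def apply_pose(points, rotation, translation):
    """Apply a rigid transform (assumed form: R, t) to an (N, 3) point cloud."""
    return points @ rotation.T + translation

def mate(part_a, part_b, pose_a, pose_b):
    """Move both parts by their predicted poses and return the assembled cloud.

    pose_a and pose_b are (rotation, translation) tuples; this layout is an
    illustrative assumption, not the representation used by NSM.
    """
    moved_a = apply_pose(part_a, *pose_a)
    moved_b = apply_pose(part_b, *pose_b)
    return np.vstack([moved_a, moved_b])
```

A well-mated pair would leave the two transformed clouds touching along the cut surface without gaps or interpenetration.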

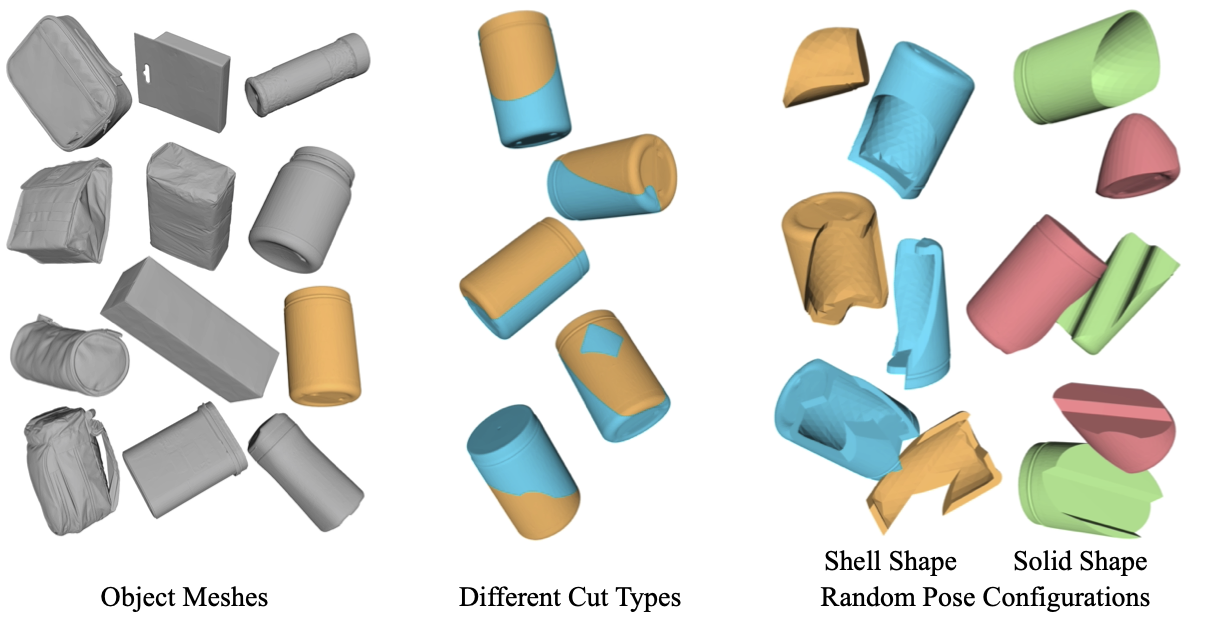

To train NSM, we present a self-supervised data collection pipeline that generates pairwise shape mating data with ground truth by randomly cutting an object mesh into two parts, resulting in a dataset that consists of 200K shape mating pairs with numerous object meshes and diverse cut types.
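The idea of obtaining ground truth for free by cutting a whole object can be sketched as follows. This is a simplified illustration under stated assumptions: it cuts a point cloud rather than a mesh, uses only a single planar cut through the centroid (one of many possible cut types), and scrambles each part with a random rigid pose. The function names are hypothetical and this is not the pipeline used to build the dataset.

```python
import numpy as np

def random_rotation(rng):
    """Sample a random 3x3 rotation from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))   # fix column signs so the factorization is unique
    if np.linalg.det(q) < 0:      # flip one axis to get a proper rotation (det = +1)
        q[:, 0] = -q[:, 0]
    return q

def make_mating_pair(points, rng):
    """Split an (N, 3) cloud with a random plane, then scramble each half.

    Returns two (scrambled_points, (R_gt, t_gt)) entries, where the
    ground-truth pose maps the scrambled part back to its mated position.
    """
    normal = rng.normal(size=3)
    normal /= np.linalg.norm(normal)
    side = (points - points.mean(axis=0)) @ normal > 0  # assumed planar cut
    sample = []
    for part in (points[side], points[~side]):
        R = random_rotation(rng)
        t = rng.uniform(-1.0, 1.0, size=3)
        scrambled = part @ R.T + t
        # Inverse of y = R x + t is x = R^T y - R^T t.
        sample.append((scrambled, (R.T, -R.T @ t)))
    return sample
```

Because the parts come from one object, the poses that undo the random scrambling are known exactly, so no manual annotation is needed.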

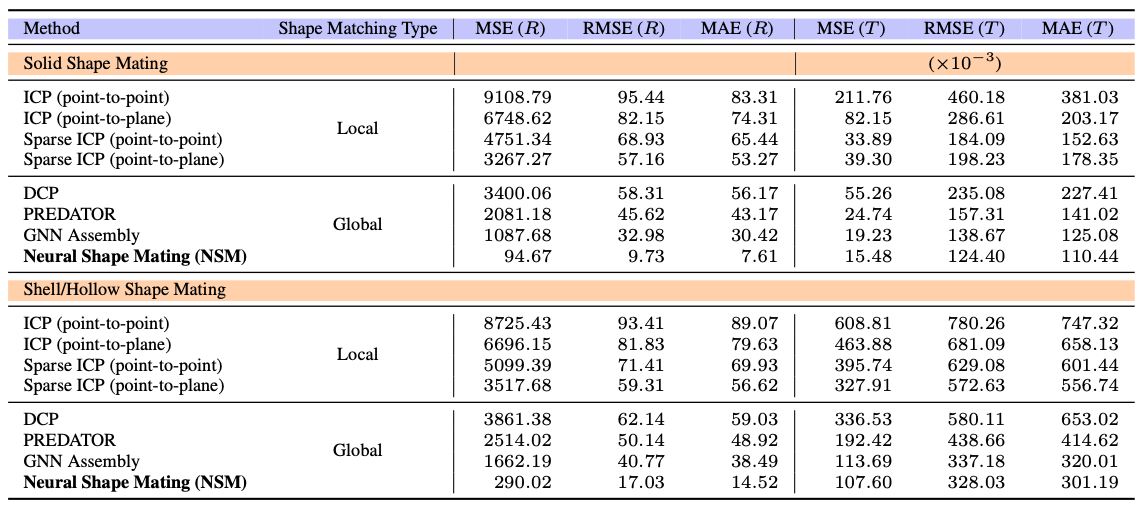

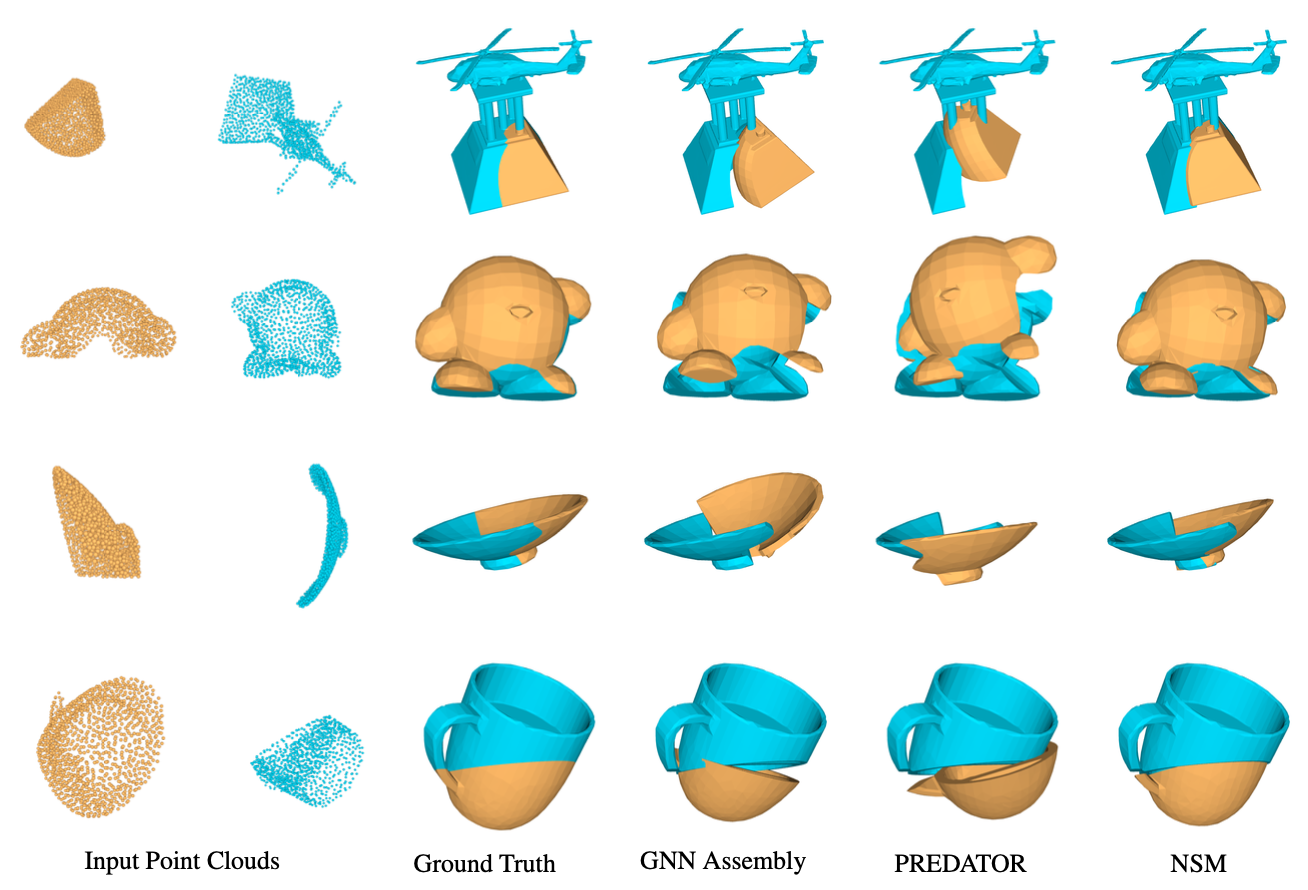

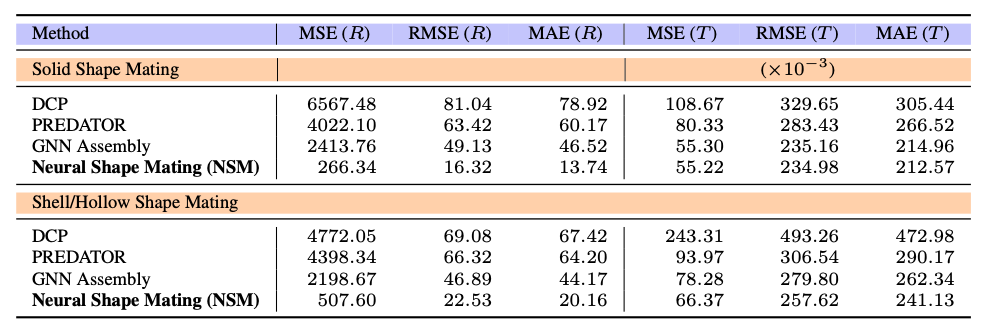

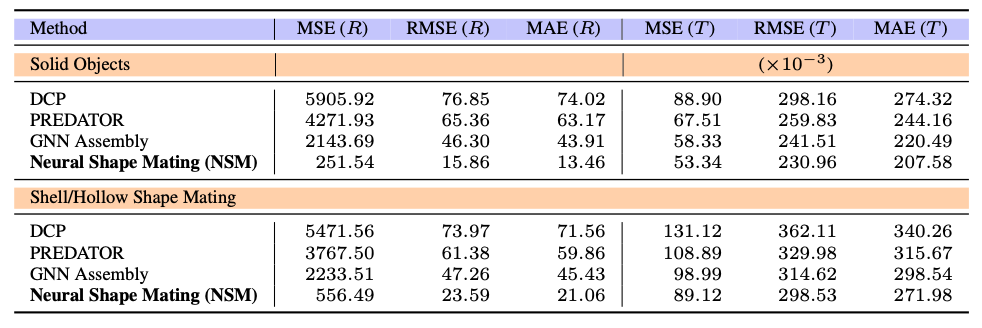

We train NSM on the collected dataset and compare it with several point cloud registration methods and one part assembly baseline approach. Extensive experimental results and ablation studies under various settings demonstrate the effectiveness of the proposed algorithm.